I’ve been meaning to write this post since I started this blog, but this time my laziness isn’t a bad thing! Thanks to my procrastination, I now have more things to write about!

DingTo (Thomas Dinges) is one of the developers focused mainly on Cycles. He’s busy doing GSoC and has added a whole handful of great new features. Here are a few of my favourites:

Wavelength and Blackbody nodes

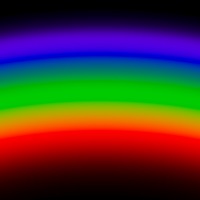

These two nodes let you input physical values and get a colour out. The Wavelength node covers the full visible spectrum: plug a gradient texture into it, with a couple of math nodes to remap the 0-1 range to 380-780 (nanometres), and you get a physically accurate rainbow!
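The remapping itself is just a multiply and an add, which is all the math nodes need to do. Here’s a minimal sketch in plain Python (not Blender’s API; the function name is made up for illustration):

```python
def factor_to_wavelength(t, lo_nm=380.0, hi_nm=780.0):
    """Remap a 0-1 gradient factor to a wavelength in nanometres."""
    t = min(max(t, 0.0), 1.0)           # clamp, in case the texture overshoots
    return lo_nm + t * (hi_nm - lo_nm)  # i.e. Multiply by 400, then Add 380


for t in (0.0, 0.5, 1.0):
    print(t, factor_to_wavelength(t))
```

In node terms that would be one Math node set to Multiply (400) followed by one set to Add (380), sitting between the gradient texture and the Wavelength node.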

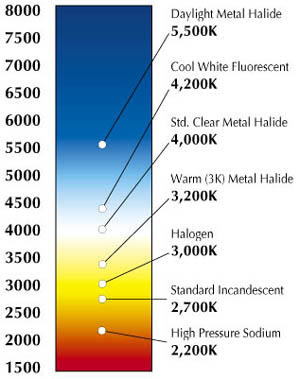

The Blackbody node, on the other hand, lets you input a colour temperature and get the right RGB colour. This is useful if you know the exact colour temperature of a specific light bulb, or if you want to get that lame-looking candle of yours to look right!

from venturelighting.com
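To get a feel for why low temperatures look orange and high ones look blue, here’s a rough sketch of the physics behind a blackbody (Planck’s law). This is not how the Cycles node is implemented, which I haven’t looked at; the function names, the 650 nm and 450 nm stand-ins for "red" and "blue", and the example temperatures are all my own assumptions:

```python
import math

H = 6.62607015e-34   # Planck constant, J*s
C = 2.99792458e8     # speed of light, m/s
K_B = 1.380649e-23   # Boltzmann constant, J/K


def planck_radiance(wavelength_nm, temperature_k):
    """Spectral radiance of an ideal blackbody (Planck's law)."""
    lam = wavelength_nm * 1e-9  # nanometres to metres
    exponent = H * C / (lam * K_B * temperature_k)
    return (2.0 * H * C ** 2) / (lam ** 5 * math.expm1(exponent))


def red_to_blue_ratio(temperature_k, red_nm=650.0, blue_nm=450.0):
    """How much more (or less) red light than blue a blackbody emits."""
    return planck_radiance(red_nm, temperature_k) / planck_radiance(blue_nm, temperature_k)


# Commonly quoted rough values: candle flame, daylight, clear blue sky
for temp in (1900, 6500, 10000):
    print(temp, round(red_to_blue_ratio(temp), 2))
```

A candle-like temperature gives a ratio far above 1 (lots of red, hardly any blue), while a very hot source drops below 1. Turning a full spectrum into a proper RGB colour takes more than two wavelengths, so treat this as an illustration only.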

Separate/Combine HSV

I’ve been asking for this one for a damn long time ;) I asked DingTo for it at least a couple times and ranted about the lack of it at least once in #blendercoders.

Before, all we had was Separate/Combine RGB, but many artists like myself don’t work in RGB much and can visualize colours much better as Hue (what colour), Saturation (the richness of the colour) and Value (brightness).

Why do we need this? Basically to make colour adjustments to textures easier. I generally use nodes as a sort of dynamic photoshopping in three dimensions, combining a bunch of unrelated textures (like rocks, grass and baked occlusion) to create a completely different material (like metal).
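Here’s the separate, tweak, combine idea as a small sketch using Python’s standard `colorsys` module rather than Blender’s nodes. The `adjust_hsv` helper and its parameters are hypothetical, just for illustration:

```python
import colorsys


def clamp01(x):
    """Keep a value inside the 0-1 range."""
    return min(max(x, 0.0), 1.0)


def adjust_hsv(rgb, hue_shift=0.0, sat_scale=1.0, val_scale=1.0):
    """Separate a colour into HSV, tweak each channel, combine back to RGB."""
    h, s, v = colorsys.rgb_to_hsv(*rgb)   # "Separate HSV"
    h = (h + hue_shift) % 1.0             # hue wraps around the colour wheel
    s = clamp01(s * sat_scale)
    v = clamp01(v * val_scale)
    return colorsys.hsv_to_rgb(h, s, v)   # "Combine HSV"


grass = (0.2, 0.6, 0.1)
print(adjust_hsv(grass, sat_scale=0.0))   # fully desaturated: a grey
print(adjust_hsv(grass, val_scale=0.5))   # same hue, half as bright
```

Doing the same thing with only Separate/Combine RGB means touching all three channels at once for what is conceptually a single slider.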

Non-Progressive Integrator for GPU

I’ve seen some good results from the non-progressive integrator, but because it was CPU-only I never really took it seriously: CPU rendering is too slow to work with.

Basically, instead of having one control for the total number of samples, it lets you choose how many samples of each kind to take per iteration. So you can choose to have more diffuse samples than glossy, since it usually takes fewer than 10 glossy samples to clean up sharp reflections, but diffuse needs a lot more.

So depending on your scene and your setup, you’ll get cleaner renders in less time.
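To see how the per-type counts add up, here’s a very simplified sketch. It assumes each iteration (AA sample) takes the chosen number of samples for every kind of shading the surface actually has, and it ignores later bounces and everything else the renderer does. The function and the dictionary keys are hypothetical, not Blender settings:

```python
def first_hit_samples(aa_samples, per_type_samples, surface_types):
    """Rough count of first-bounce samples per pixel.

    per_type_samples: e.g. {"diffuse": 20, "glossy": 5}
    surface_types: the kinds of shading the surface being hit actually has
    """
    per_iteration = sum(per_type_samples[t] for t in surface_types)
    return aa_samples * per_iteration


settings = {"diffuse": 20, "glossy": 5}
print(first_hit_samples(4, settings, ["diffuse", "glossy"]))  # surface with both
print(first_hit_samples(4, settings, ["diffuse"]))            # purely diffuse surface
```

The point is that the glossy part only costs a handful of samples per iteration, instead of getting as many as the diffuse part whether it needs them or not.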

I haven’t tried it out fully yet, so I’ll leave any further elaboration to another post.

Ray Depth

The Ray Depth output of the Light Path node basically gives you control over what happens on each light bounce. You can use it on lamps to control the number of light bounces per lamp, or allow only a single light bounce for transmission rays to eliminate transmission caustics (while keeping reflection caustics). Or you could use it in materials, making a glossy material act like a diffuse one after a couple of bounces so that it doesn’t give any fireflies but still adds a little colour bleeding. Use your imagination :)

The only downside is that this is purely visual and doesn’t actually reduce render time at all. It might reduce some noise in the case of caustics, but it still calculates the same number of bounces for each ray, even if you have the emission shader become a holdout shader after a few bounces. So use it for artistic purposes, not to optimize render time (except in the caustics case).
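The glossy-to-diffuse trick mentioned above boils down to a Math node in Greater Than mode driving the factor of a Mix Shader. Here’s the logic as a plain Python sketch, assuming the depth counts bounces starting from 0 for the camera ray; the function names and the threshold of 2 are just for illustration:

```python
def greater_than(value, threshold):
    """Math node in 'Greater Than' mode: 1.0 if value > threshold, else 0.0."""
    return 1.0 if value > threshold else 0.0


def shader_for_bounce(ray_depth, switch_after=2):
    """Which input of the Mix Shader ends up being used at this depth."""
    fac = greater_than(ray_depth, switch_after)  # Ray Depth -> Math -> Fac
    return "diffuse" if fac == 1.0 else "glossy"


for depth in range(5):
    print(depth, shader_for_bounce(depth))
```

With a threshold of 2, the first few bounces still see the glossy shader and anything deeper sees the diffuse one. As noted above, the rays are still traced either way, so this changes the look, not the render time.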

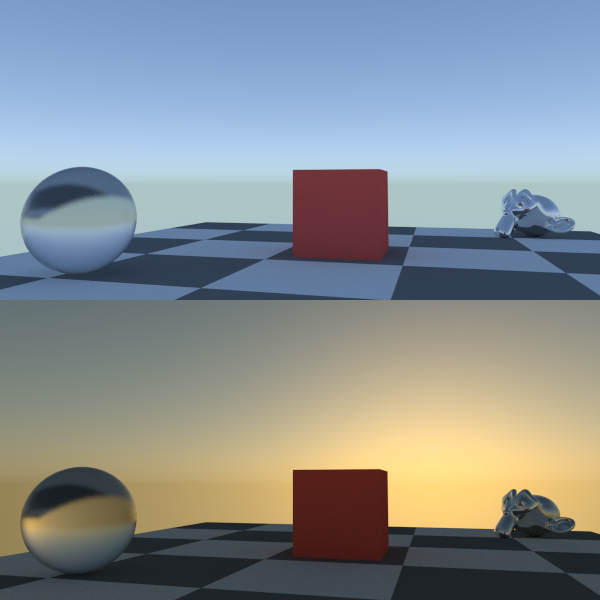

Sky Model

Added just yesterday, this is a much more realistic algorithm for the sky texture node, especially when it comes to sunsets.

All the features except the Sky Model are already in trunk, so get a build from graphicall.org or builder.blender.org to use them :)

Hi greg, great blog.

I would appreciate it if you could explain what the new Vector Transform node is used for.

Best regards