Kévin Dietrich has been working on something I didn’t even know was possible: motion blur support for meshes that have a changing number of vertices, like fluid simulations.

Category Archives: Cycles

Denoising in Cycles Coming Soon

Model by 1DInc

Lukas Stockner has been working on yet another awesome Cycles feature, this time a sneaky method to reduce noise without actually rendering more samples.

Read more and download a Windows build from the BA thread.

To put it simply, “denoising” is a process of analysing your render and trying to shmoosh the noise/grain together and make it look clean.

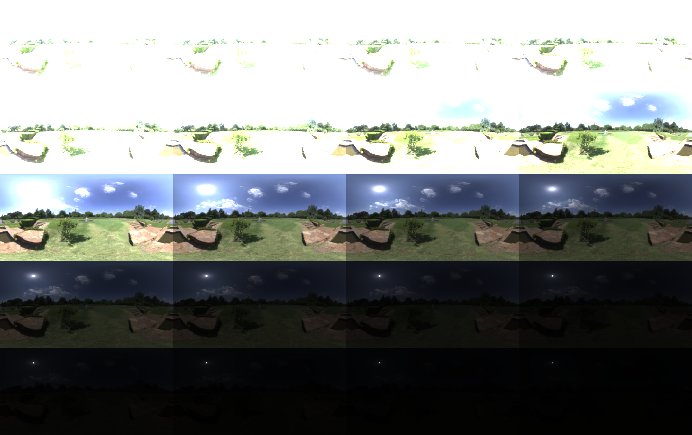

What Makes a Good HDRI and How to Use It Correctly

HDRIs are everywhere these days. If you’ve got a half-decent camera, a tripod and some software you can even make them yourself.

But just like creating art in Blender, being able to do it at all is not the same as being able to do it well.

So, after I created my first crappy HDRI and discovered how challenging it could be, I decided to embark on a quest. I wanted to create the perfect high dynamic range environment map that would give you perfectly accurate and realistic lighting as if you had teleported your CG scene to the actual location of the photo itself.

In truth, this is an unending quest, but I’ve made some fair progress over the years. So without further ado, let me explain…

What Makes a Good HDRI

Just like art, the quality of an HDRI can be a subjective thing, but I think we can all agree that there are a few fundamental attributes that define (although not exclusively) how useful or accurate an HDRI is.

Dynamic Range

Let’s begin with what is, to me, the most important aspect of any HDR image that you intend to use for lighting.

Rolling Shutter Coming to Cycles

Sergey is working on another cool motion-blur-related(ish) feature: The rolling shutter effect, which you find in most photo and video cameras these days.

This is presumably to help with integrating renders into fast-moving camera footage, but of course you can use it for whatever crazy purpose you desire ;)

It’s not in master just yet, but there’s a solid patch awaiting review:

This is an attempt to emulate real CMOS cameras which reads sensor by scanlines and hence different scanlines are sampled at a different moment in time, which causes so called rolling shutter effect. This effect will, for example, make vertical straight lines being curved when doing horizontal camera pan.

Sergey did a quick render test to demonstrate it a bit more clearly:

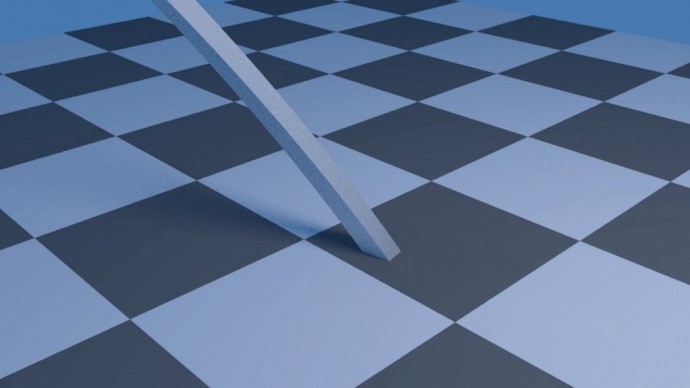
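If you want to see the idea in numbers, here is a minimal sketch of the scanline timing described in the patch note. It is not Cycles code: the function names, the `readout` duration and the flat 2D pan model are all assumptions made for illustration. Each row of the image is sampled a little later than the one above it, so a vertical line drifts sideways row by row while the camera pans.

```python
def scanline_times(height, readout=1.0):
    """Time at which each scanline is sampled, top row first.

    Assumption: rows are read at a constant rate over `readout` time units.
    """
    if height == 1:
        return [0.0]
    return [readout * row / (height - 1) for row in range(height)]


def apparent_x(world_x, pan_speed, times):
    """Image-space x of a vertical line for each scanline.

    Assumption: a flat 2D model where the camera pans horizontally at
    `pan_speed` pixels per time unit, so the line shifts by pan_speed * t.
    """
    return [world_x - pan_speed * t for t in times]


times = scanline_times(5)
xs = apparent_x(100.0, 20.0, times)
for row, (t, x) in enumerate(zip(times, xs)):
    print(f"row {row}: sampled at t={t:.2f}, line drawn at x={x:.1f}")
```

In this simplified model the line comes out slanted rather than perfectly vertical. With a real perspective camera the distortion is not uniform across the frame, which is where the bending in the render test comes from.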

New Pixel Filter Type: Blackman-Harris

A new type of pixel filter called “Blackman-Harris” has been added to Cycles by Lukas Stockner to complement the existing Gaussian and Box filters.

From the commit:

This commit adds the Blackman-Harris windows function as a pixel filter to Cycles. On some cases, such as wireframes or high-frequency textures, Blackman-Harris can give subtle but noticable improvements over the Gaussian window.

See also: The initial patch with some discussions.

What is a pixel filter, you ask? As I understand it, it’s basically the function used to apply anti-aliasing in your render. Different filters use slightly different math and methods to calculate how each pixel appears relative to its neighboring pixels, which makes edges look smoother.
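To make that a little more concrete, here is a rough sketch of the three filter shapes as weighting functions. This is not the Cycles implementation: the function names, the choice of Gaussian sigma and the `filtered_pixel` helper are assumptions for illustration. The Blackman-Harris coefficients are the standard four-term window values.

```python
import math


def box(x, width):
    """Every sample inside the filter width counts equally."""
    return 1.0 if abs(x) <= width / 2 else 0.0


def gaussian(x, width):
    """Bell curve; sigma is an assumed value that is near zero at the edge."""
    if abs(x) > width / 2:
        return 0.0
    sigma = width / 6.0
    return math.exp(-0.5 * (x / sigma) ** 2)


def blackman_harris(x, width):
    """Standard four-term Blackman-Harris window centred on the pixel."""
    if abs(x) > width / 2:
        return 0.0
    u = x / width + 0.5  # map [-width/2, width/2] to [0, 1]
    return (0.35875
            - 0.48829 * math.cos(2 * math.pi * u)
            + 0.14128 * math.cos(4 * math.pi * u)
            - 0.01168 * math.cos(6 * math.pi * u))


def filtered_pixel(samples, weight_fn, width):
    """Weighted average of (offset_from_pixel_centre, value) samples."""
    total = sum(weight_fn(x, width) for x, _ in samples)
    return sum(weight_fn(x, width) * v for x, v in samples) / total


# A bright sample near the pixel centre and a dark one further out.
samples = [(0.1, 1.0), (0.6, 0.0)]
for fn in (box, gaussian, blackman_harris):
    print(fn.__name__, round(filtered_pixel(samples, fn, 1.5), 3))
```

The box filter treats both samples the same, while the other two give far more weight to the sample near the centre, which is why the choice of filter changes how thin details resolve.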

The difference between the three types is most noticeable on thin details like wires:

New Custom Motion Blur Curves

A couple of weeks ago, Sergey implemented the ability to control the exact appearance of motion blur streaks by using a simple curve widget in the render settings:

From the initial patch:

Previously shutter was instantly opening, staying opened for the shutter time period of time and then instantly closing. This isn’t quite how real cameras are working, where shutter is opening with some curve. Now it is possible to define user curve for how much shutter is opened across the sampling period of time.

This could be used for example to make motion blur trails softer.

Shutter curve now can be controlled using curve mapping widget in the motion blur panel in Render buttons. Only mapping from 0..1 by x axis are allowed, Y values will be normalized to fill in 0..1 space as well automatically.

Y values of 0 means fully closed shutter, Y values of 1 means fully opened shutter.
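For the curious, here is one way a shutter curve like this could be turned into motion blur sample times. It is a sketch under my own assumptions, not the code from the patch: `build_cdf`, `sample_time`, the table `resolution` and the `triangle` curve are all made up for illustration. The idea is to pick more sample times where the shutter is more open, by building a cumulative table from the curve and looking up a uniform random number in it.

```python
import bisect


def build_cdf(curve, resolution=256):
    """Cumulative table of a shutter curve defined on x in [0, 1].

    The curve is evaluated at bin midpoints and normalised so the table
    ends at 1.0, regardless of the curve's original Y scale.
    """
    ys = [max(curve((i + 0.5) / resolution), 0.0) for i in range(resolution)]
    total = sum(ys)
    cdf, acc = [], 0.0
    for y in ys:
        acc += y / total
        cdf.append(acc)
    return cdf


def sample_time(u, cdf):
    """Map a uniform number u in [0, 1) to a time within the shutter period."""
    i = min(bisect.bisect_left(cdf, u), len(cdf) - 1)
    return (i + 0.5) / len(cdf)


def triangle(x):
    """Hypothetical curve: shutter opens linearly, then closes linearly."""
    return 1.0 - abs(2.0 * x - 1.0)


cdf = build_cdf(triangle)
for u in (0.1, 0.5, 0.9):
    print(f"u={u} -> shutter time {sample_time(u, cdf):.3f}")
```

With the triangle curve, evenly spread random numbers get pulled toward the middle of the shutter period, so the start and end of each streak receive fewer samples and fade out softly. A flat curve (always 1) gives back the old instant open and close behaviour.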